Robots.txt Generator

Create a valid robots.txt file to control which parts of your site search engine crawlers such as Googlebot and Bingbot are allowed to crawl.

Crawl rules (User-agent: *)

Adds rules that ask GPTBot, ClaudeBot, Google-Extended, PerplexityBot, Bytespider, and CCBot not to crawl your content for AI model training.

Generated robots.txt

User-agent: *
Allow: /

Common robots.txt Examples

Ready-to-use configurations for the most common website setups.

Allow everything (default)

Open to all crawlers. Standard for public websites.

User-agent: *
Allow: /

Block admin and private areas

Allow public crawling but protect backend paths.

User-agent: *
Disallow: /admin
Disallow: /wp-admin
Disallow: /private

Sitemap: https://example.com/sitemap.xml

Block AI training crawlers

Allow search engines but opt out of AI model training.

User-agent: *
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /
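One way to sanity-check a configuration like this is to parse it locally and ask how individual crawlers would be treated. The sketch below uses Python's standard-library `urllib.robotparser`; the helper name `is_allowed` and the sample URL are illustrative assumptions, and real crawlers may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# The "Block AI training crawlers" example, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if this parser would let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Hypothetical URL, used only for illustration.
    page = "https://example.com/blog/post"
    for agent in ("Googlebot", "bingbot", "GPTBot", "ClaudeBot"):
        print(agent, is_allowed(ROBOTS_TXT, agent, page))
```

Search crawlers fall through to the `*` group, while each named AI crawler matches its own group and is disallowed.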

Block all crawlers

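Asks every compliant crawler to stay out of the entire site. This does not make the site private; use authentication for content that must not be reachable.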

User-agent: *
Disallow: /
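The examples above all follow the same shape: one or more groups, each with a `User-agent` line and its rules, optionally followed by `Sitemap` lines. A minimal sketch of how a generator might assemble that text is shown below. The function name `build_robots_txt` and its argument layout are assumptions made for illustration, not the way this tool is actually implemented.

```python
def build_robots_txt(groups, sitemaps=()):
    """Assemble robots.txt text.

    groups:   list of (user_agent, [(directive, path), ...]) tuples
    sitemaps: iterable of absolute sitemap URLs
    """
    blocks = []
    for user_agent, rules in groups:
        lines = [f"User-agent: {user_agent}"]
        lines += [f"{directive}: {path}" for directive, path in rules]
        blocks.append("\n".join(lines))
    sitemap_lines = [f"Sitemap: {url}" for url in sitemaps]
    if sitemap_lines:
        blocks.append("\n".join(sitemap_lines))
    # Groups are separated by a blank line; the file ends with a newline.
    return "\n\n".join(blocks) + "\n"


if __name__ == "__main__":
    print(build_robots_txt(
        [("*", [("Disallow", "/admin"),
                ("Disallow", "/wp-admin"),
                ("Disallow", "/private")])],
        sitemaps=["https://example.com/sitemap.xml"],
    ))
```

Keeping each group as its own block makes it straightforward to append extra groups, such as one per AI crawler, without touching the default `*` group.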

What is a robots.txt File?

A robots.txt file is a plain text file placed at the root of your website (yourdomain.com/robots.txt) that tells search engine crawlers which URLs they may and may not crawl. It follows the Robots Exclusion Protocol, standardized as RFC 9309 and honored by Google, Bing, and the other major search engines.

While robots.txt is not a security tool and cannot prevent determined bots from accessing your site, it is an important part of technical SEO. Properly configured, it prevents crawl budget from being wasted on admin panels, duplicate content, and internal search result pages, keeping Google focused on the content you actually want indexed.

Protect admin areas

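Disallow paths like /admin, /wp-admin, and /dashboard to stop crawlers from fetching backend pages and to reduce unnecessary crawling. A disallowed URL can still show up in search results if other pages link to it, so use a noindex directive or authentication for pages that must stay out of results entirely.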
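Disallow rules match by path prefix, so a rule such as `Disallow: /admin` covers more than the `/admin` page itself. The sketch below illustrates this with Python's standard-library `urllib.robotparser`; the helper `blocked_paths` and the sample paths are hypothetical.

```python
from urllib.robotparser import RobotFileParser

RULES = """\
User-agent: *
Disallow: /admin
"""


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that user_agent may not fetch under robots_txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


if __name__ == "__main__":
    # Hypothetical paths chosen to show prefix matching.
    sample = ["/admin", "/admin/users", "/administrator", "/blog/admin-tips"]
    print(blocked_paths(RULES, sample))
```

Here `/administrator` is blocked along with `/admin` and `/admin/users`, because all three start with `/admin`, while `/blog/admin-tips` is unaffected. Writing the rule as `/admin/` narrows it to that directory's contents.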

Add your sitemap

Including your sitemap URL in robots.txt helps crawlers discover your sitemap automatically without relying on Google Search Console submission.
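To confirm that a Sitemap line is actually picked up, the file can be parsed and its sitemap entries listed. This sketch assumes Python 3.8 or later, where `RobotFileParser.site_maps()` is available; the helper name `list_sitemaps` is illustrative.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin
Disallow: /wp-admin
Disallow: /private

Sitemap: https://example.com/sitemap.xml
"""


def list_sitemaps(robots_txt):
    """Return the sitemap URLs declared in robots_txt (empty list if none)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap lines are present.
    return parser.site_maps() or []


if __name__ == "__main__":
    print(list_sitemaps(ROBOTS_TXT))
```

Sitemap lines are independent of any `User-agent` group, so they can sit anywhere in the file.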

Do not block CSS or JS

Google needs to render your pages to understand them. Blocking stylesheets or JavaScript files can prevent proper indexing and hurt your rankings.
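A quick local check can flag stylesheets or scripts that a draft file would block. The sketch below is one possible approach using `urllib.robotparser`; the draft rules, asset URLs, and the helper `blocked_assets` are assumptions for illustration. Note that this parser does plain prefix matching and does not implement the `*` and `$` wildcards that Google supports, so wildcard rules need a different checker.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical draft that blocks an asset directory by mistake.
DRAFT = """\
User-agent: *
Disallow: /admin
Disallow: /assets/
"""

# Hypothetical asset URLs the pages depend on for rendering.
ASSETS = [
    "https://example.com/assets/site.css",
    "https://example.com/assets/app.js",
    "https://example.com/static/theme.css",
]


def blocked_assets(robots_txt, asset_urls, user_agent="Googlebot"):
    """Return the asset URLs that user_agent may not fetch."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in asset_urls if not parser.can_fetch(user_agent, u)]


if __name__ == "__main__":
    for url in blocked_assets(DRAFT, ASSETS):
        print("blocked:", url)
```

If the list is non-empty, either remove the offending Disallow rule or add a more specific Allow rule for the asset paths.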

Test before deploying

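Verify your file before going live, and after deploying confirm in Google Search Console's robots.txt report (which replaced the older robots.txt tester) that Google fetched and parsed it as expected. A misconfigured file can accidentally block your entire site from Google.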

Related Tools

More free seo tools tools

URL Slug Generator

Convert any title or phrase into a clean, SEO-friendly URL slug with lowercase formatting and hyphen separation.

SEO Checklist

Work through a step-by-step SEO checklist covering technical, on-page, content, and link building essentials.

SERP Preview Tool

See exactly how your title tag and meta description appear in Google search results before you publish.

Meta Tag Generator

Generate complete HTML meta tags for any webpage including title, description, Open Graph, and Twitter Card tags.

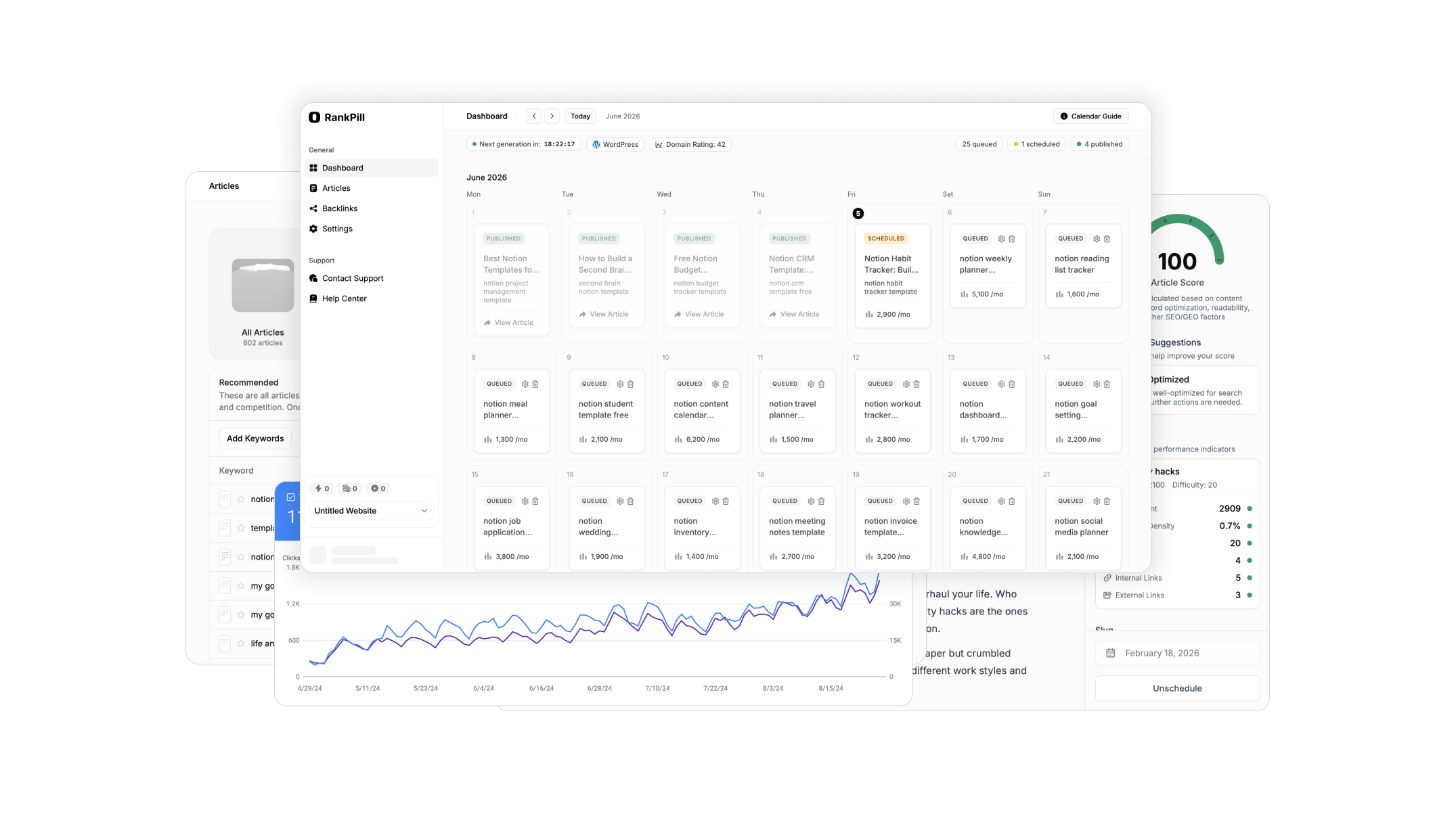

Want these on autopilot?

RankPill automates everything these tools do. Meta descriptions, titles, content briefs, and full articles published to your site every day without lifting a finger.