XML Sitemap Validator

Validate your XML sitemap for syntax errors, broken URLs, and missing pages that could prevent proper Google indexing.

Sitemap Spec Cheatsheet

The rules this validator checks against - straight from sitemaps.org and Google's guidelines.

| Rule | Value | Notes |
|---|---|---|
| URL limit per sitemap | 50,000 | Split into multiple sitemaps + index if over. |
| File size (uncompressed) | 50 MB | Compress with .gz if approaching the limit. |
| Encoding | UTF-8 | All content must be UTF-8 encoded. |
| loc format | Absolute URL | Must start with http:// or https://. Same domain as sitemap. |
| lastmod format | W3C datetime | YYYY, YYYY-MM, YYYY-MM-DD, or ISO-8601 with timezone. |
| changefreq values | always, hourly, daily, weekly, monthly, yearly, never | Ignored by Google. Optional and safe to omit. |
| priority range | 0.0–1.0 | Ignored by Google. Optional and safe to omit. |
| Protocol | Match canonical | Do not mix http:// and https:// URLs in one sitemap. |
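To make the table concrete, here is a minimal Python sketch of how several of these rules could be checked with the standard library. It is not this validator's actual implementation: the function name `check_sitemap`, the regular expression, and the message wording are all illustrative assumptions, and the file-size and encoding rules are left out.

```python
import re
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
MAX_URLS = 50_000
CHANGEFREQ = {"always", "hourly", "daily", "weekly", "monthly", "yearly", "never"}
# W3C datetime: YYYY, YYYY-MM, YYYY-MM-DD, or a full timestamp with a timezone.
LASTMOD_RE = re.compile(
    r"^\d{4}(-\d{2}(-\d{2}(T\d{2}:\d{2}(:\d{2}(\.\d+)?)?(Z|[+-]\d{2}:\d{2}))?)?)?$"
)


def check_sitemap(xml_text, sitemap_url):
    """Return a list of problems found in a <urlset> document (hypothetical helper)."""
    problems = []
    root = ET.fromstring(xml_text)  # raises ParseError on malformed XML
    entries = root.findall("sm:url", NS)
    if len(entries) > MAX_URLS:
        problems.append(f"{len(entries)} URLs exceeds the {MAX_URLS} limit")

    sitemap_host = urlparse(sitemap_url).netloc
    schemes = set()
    for entry in entries:
        loc = entry.findtext("sm:loc", default="", namespaces=NS).strip()
        parsed = urlparse(loc)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append(f"{loc!r}: loc is not an absolute http(s) URL")
        else:
            schemes.add(parsed.scheme)
            if parsed.netloc != sitemap_host:
                problems.append(f"{loc!r}: host differs from the sitemap's host")

        lastmod = entry.findtext("sm:lastmod", namespaces=NS)
        if lastmod is not None and not LASTMOD_RE.match(lastmod.strip()):
            problems.append(f"{loc!r}: lastmod {lastmod!r} is not W3C datetime")

        changefreq = entry.findtext("sm:changefreq", namespaces=NS)
        if changefreq is not None and changefreq.strip() not in CHANGEFREQ:
            problems.append(f"{loc!r}: unknown changefreq {changefreq!r}")

        priority = entry.findtext("sm:priority", namespaces=NS)
        if priority is not None:
            try:
                in_range = 0.0 <= float(priority) <= 1.0
            except ValueError:
                in_range = False
            if not in_range:
                problems.append(f"{loc!r}: priority {priority!r} outside 0.0-1.0")

    if len(schemes) > 1:
        problems.append("sitemap mixes http:// and https:// URLs")
    return problems
```

Calling `check_sitemap(xml_text, "https://example.com/sitemap.xml")` on a clean file returns an empty list; each violation adds one message.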

What Is an XML Sitemap?

An XML sitemap is a machine-readable list of the URLs on your site that you want search engines to discover and index. It uses the sitemaps.org XML protocol (adopted by Google, Bing, and Yandex) and is served at a stable URL, most commonly /sitemap.xml. For large sites, a sitemap index file can reference up to 50,000 child sitemaps, each of which can list up to 50,000 URLs.
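As a rough illustration of the index structure described above, the following Python sketch splits a URL list into child sitemaps of at most 50,000 entries and builds an index that points at them. The function name `build_sitemaps`, the `sitemap-N.xml` file naming, and the `base_url` parameter are assumptions made for this example, not part of the protocol.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000


def build_sitemaps(urls, base_url, max_urls=MAX_URLS):
    """Return ({filename: urlset_xml}, sitemapindex_xml) for a list of URLs."""
    files = {}
    index = ET.Element("sitemapindex", xmlns=NS)
    for n, start in enumerate(range(0, len(urls), max_urls), start=1):
        # One <urlset> per chunk of at most max_urls entries.
        urlset = ET.Element("urlset", xmlns=NS)
        for url in urls[start:start + max_urls]:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
        name = f"sitemap-{n}.xml"  # hypothetical naming scheme
        files[name] = ET.tostring(urlset, encoding="unicode")
        # The index lists each child sitemap by its absolute URL.
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = f"{base_url}/{name}"
    return files, ET.tostring(index, encoding="unicode")
```

The `max_urls` parameter exists mainly so the chunking can be tried with small inputs; in production it would stay at the 50,000 default.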

A well-formed sitemap does two things at once: it surfaces pages that your internal linking would otherwise bury, and it gives search engines an accurate lastmod hint so they know which pages are worth re-crawling. A malformed sitemap does neither. Worse, a sitemap that lists broken, noindexed, or redirecting URLs spends crawl requests on pages that can never be indexed. This validator flags the kinds of issues that Search Console reports only after Google has fetched the sitemap, so you can fix them before the next crawl.

Keep lastmod accurate

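Only update lastmod when the content has meaningfully changed. Google has said it uses lastmod only when the value is consistently and verifiably accurate, so inflated dates can lead it to discount the signal across your entire site.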
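One way to keep lastmod honest is to tie it to a hash of the page content, so the date moves only when the content does. The sketch below assumes you store the previous hash and lastmod per URL; the function name `updated_lastmod` and its parameters are hypothetical. It also assumes the caller passes only the main content, since hashing a full page that embeds timestamps or rotating widgets would register a change on every build.

```python
import hashlib
from datetime import datetime, timezone


def updated_lastmod(content, previous_hash, previous_lastmod, now=None):
    """Return (content_hash, lastmod); lastmod changes only if the hash does."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    if digest == previous_hash and previous_lastmod:
        # Content is unchanged: keep the existing date.
        return digest, previous_lastmod
    now = now or datetime.now(timezone.utc)
    # W3C datetime with an explicit UTC offset.
    lastmod = now.astimezone(timezone.utc).isoformat(timespec="seconds")
    return digest, lastmod
```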

List only indexable URLs

Your sitemap should never include URLs that are noindexed, that redirect elsewhere, or that declare a different URL as their canonical. If a URL shouldn't be in Google's index, it shouldn't be in your sitemap either.
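The rule above can be sketched as a filter that runs on data you have already fetched for each URL. This Python example is a simplification under stated assumptions: the function name `sitemap_exclusion_reason` is hypothetical, `headers` is a plain dict keyed exactly as `X-Robots-Tag`, and the regular expressions only match tags whose attributes appear in the order shown (a real HTML parser would be more robust).

```python
import re

# Simplified patterns: assume name/rel comes before content/href in the tag.
META_ROBOTS_RE = re.compile(
    r'<meta[^>]+name=["\']robots["\'][^>]*content=["\']([^"\']*)["\']', re.I
)
CANONICAL_RE = re.compile(
    r'<link[^>]+rel=["\']canonical["\'][^>]*href=["\']([^"\']*)["\']', re.I
)


def sitemap_exclusion_reason(url, status, headers, html):
    """Return why a URL should be left out of the sitemap, or None if it looks indexable."""
    if 300 <= status < 400:
        return "redirect"
    if status != 200:
        return f"status {status}"
    if "noindex" in headers.get("X-Robots-Tag", "").lower():
        return "noindex header"
    meta = META_ROBOTS_RE.search(html)
    if meta and "noindex" in meta.group(1).lower():
        return "noindex meta tag"
    canonical = CANONICAL_RE.search(html)
    # Ignore a trailing-slash difference when comparing the canonical to the URL.
    if canonical and canonical.group(1).rstrip("/") != url.rstrip("/"):
        return "canonical points elsewhere"
    return None
```

Keeping the check separate from the fetching step means the same function can be run against crawl exports or cached responses.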

Reference it from robots.txt

Add a 'Sitemap: https://yourdomain.com/sitemap.xml' line to robots.txt. It's the primary way crawlers discover your sitemap without manual submission.
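To confirm the reference is in place, you can read the Sitemap lines back out of robots.txt. The Python sketch below works on text you have already downloaded; the function name `sitemap_urls_from_robots` is an assumption for this example, and it treats the directive name as case-insensitive.

```python
def sitemap_urls_from_robots(robots_txt):
    """Return the URLs declared on Sitemap: lines in a robots.txt body."""
    urls = []
    for line in robots_txt.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments
        key, sep, value = line.partition(":")  # split at the first colon only
        if sep and key.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls
```

For a robots.txt containing the line from the tip above, the function returns a list with `https://yourdomain.com/sitemap.xml` as its only element.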

Submit to Search Console

Even with the robots.txt reference, submit the sitemap directly in Google Search Console. It unlocks the coverage and indexing reports that surface why specific URLs fail to index.


Related Tools

More free analysis tools

Canonical Tag Checker

Check any URL for canonical tag issues that cause duplicate content problems and dilute your page's search ranking signals.

Keyword Density Checker

Measure keyword frequency in any content and identify over-optimization or under-usage before publishing to search engines.

Open Graph Checker

Inspect any URL's Open Graph tags, meta description, and Twitter Card data to verify correct social previews and SEO output.

Heading Tag Checker

Audit the H1 through H6 heading structure on any webpage to find hierarchy issues, duplicate H1s, and missing tags.

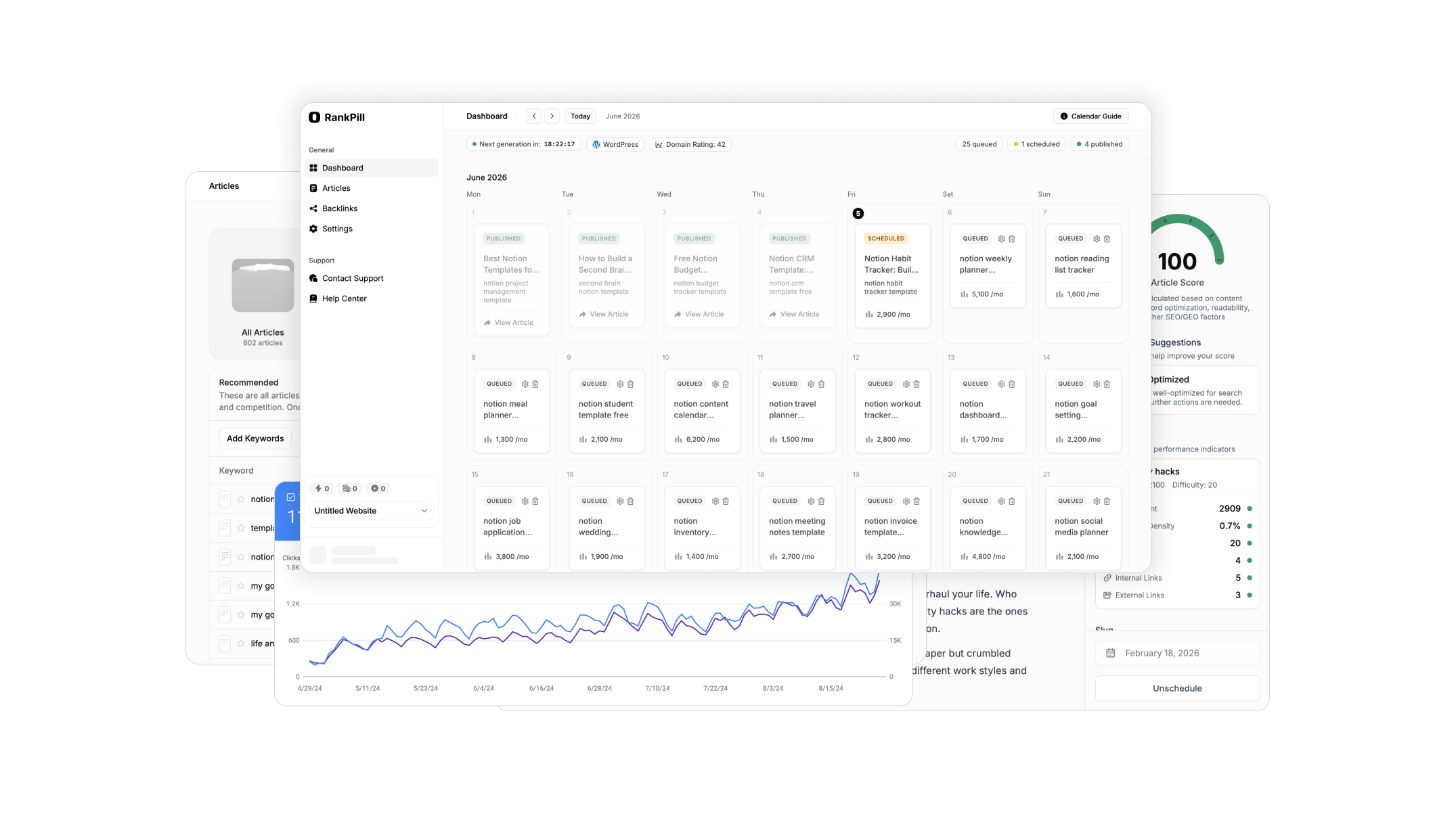

Want these on autopilot?

RankPill automates everything these tools do. Meta descriptions, titles, content briefs, and full articles published to your site every day without lifting a finger.